Joint Tracking of Features and Edges

Stanley

Overview

Tracking features between consecutive image frames is a fundamental problem in computer vision. Previous approaches to feature tracking consider each feature independently of the other features, thus neglecting important information that is available in determining the motion of a feature. We have developed a framework in which features are tracked jointly so that the estimated motion of a feature is influenced by the estimated motion of neighboring features. The approach also handles the problem of tracking edges in a unified way by estimating motion perpendicular to the edge, using the motion of neighboring features to resolve the aperture problem.

Differential methods for sparse feature tracking are based on the well-known optic flow constraint equation $f(u, v; I) = I_x u + I_y v + I_t = 0$, where the subscripts denote partial derivatives of the image I, and (u, v) is the displacement of the pixel between the two frames. The aperture problem arises because this single equation is insufficient to recover the two unknowns u and v. The Lucas-Kanade approach to overcoming the aperture problem assumes that the unknown displacement of a pixel is constant within some neighborhood. Alternatively, the Horn-Schunck approach, which is typically used by dense optical flow methods, regularizes the underconstrained optic flow constraint equation by imposing a global smoothness term. While Lucas-Kanade finds the displacement of a small window around a single pixel, Horn-Schunck computes global displacement functions over the entire image.
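To make the role of the neighborhood assumption concrete, the following is a minimal sketch of a single Lucas-Kanade least-squares solve over one window. It is an illustration only, not the implementation evaluated on this page: the function name `lucas_kanade_step`, the `eps` threshold, and the sample derivative values are all assumptions, and a real tracker would compute the derivatives from image data and iterate over a pyramid.

```python
def lucas_kanade_step(Ix, Iy, It, eps=1e-9):
    """Least-squares solution of Ix*u + Iy*v + It = 0 over one window.

    Ix, Iy, It are equal-length sequences of derivative samples taken at
    the pixels of the window (hypothetical inputs for this sketch).
    Returns (u, v), or None when the 2x2 system is singular, which is
    the aperture problem.
    """
    # Entries of the 2x2 gradient covariance matrix and the error vector.
    gxx = sum(ix * ix for ix in Ix)
    gxy = sum(ix * iy for ix, iy in zip(Ix, Iy))
    gyy = sum(iy * iy for iy in Iy)
    ex = -sum(ix * it for ix, it in zip(Ix, It))
    ey = -sum(iy * it for iy, it in zip(Iy, It))

    det = gxx * gyy - gxy * gxy
    if abs(det) < eps:
        return None  # gradients span only one direction (or none)

    # Closed-form inverse of the symmetric 2x2 matrix.
    u = (gyy * ex - gxy * ey) / det
    v = (gxx * ey - gxy * ex) / det
    return u, v


# Textured window whose temporal derivatives were generated from the
# displacement (0.5, -0.25); the solve recovers that displacement.
Ix = [1.0, 0.0, 2.0, 1.0]
Iy = [0.0, 1.0, 1.0, -1.0]
It = [-(ix * 0.5 + iy * -0.25) for ix, iy in zip(Ix, Iy)]
textured = lucas_kanade_step(Ix, Iy, It)

# Vertical edge: no gradient along y, so the system is singular.
edge = lucas_kanade_step([1.0, 2.0, 3.0], [0.0, 0.0, 0.0], [-2.0, -4.0, -6.0])
```

On the textured window the two unknowns are well constrained, whereas on the edge window the function returns `None` because only the motion perpendicular to the edge is observable.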

We combine the ideas of Lucas-Kanade and Horn-Schunck so that, instead of aggregating local information to improve global flow, global information is aggregated to improve the tracking of sparse feature points. Because of their sparsity, the motion displacements of neighboring features cannot simply be averaged as is commonly done. Rather, an affine motion model is fit to the neighboring features, and the resulting expected flow vector is used in performing the Newton-Raphson iterations for computing the displacement of a particular feature. By incorporating off-diagonal elements into the otherwise block-diagonal tracking matrix, significantly improved results are obtained, particularly in areas of repetitive texture, one-dimensional texture (edges), or no texture.
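The idea of fitting an affine motion model to neighboring features and using its prediction during the per-feature solve can be sketched as follows. This is a simplified illustration under stated assumptions, not the formulation used to produce the results on this page: the function names, the quadratic penalty that pulls the displacement toward the expected flow, and the weight `lam` are all hypothetical choices made for the sketch, and only a single linear solve is shown rather than the full Newton-Raphson iteration.

```python
def solve3(M, b):
    """Solve a 3x3 linear system by Gaussian elimination with pivoting."""
    A = [row[:] + [rhs] for row, rhs in zip(M, b)]
    n = 3
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(A[r][c]))
        if abs(A[p][c]) < 1e-12:
            raise ValueError("need at least 3 non-collinear neighbors")
        A[c], A[p] = A[p], A[c]
        for r in range(c + 1, n):
            f = A[r][c] / A[c][c]
            for k in range(c, n + 1):
                A[r][k] -= f * A[c][k]
    x = [0.0] * n
    for r in reversed(range(n)):
        s = A[r][n] - sum(A[r][k] * x[k] for k in range(r + 1, n))
        x[r] = s / A[r][r]
    return x


def fit_affine_flow(points, flows):
    """Least-squares fit of u = a0 + a1*x + a2*y and v = b0 + b1*x + b2*y."""
    rows = [(1.0, x, y) for x, y in points]
    M = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    bu = [sum(r[i] * f[0] for r, f in zip(rows, flows)) for i in range(3)]
    bv = [sum(r[i] * f[1] for r, f in zip(rows, flows)) for i in range(3)]
    return solve3(M, bu), solve3(M, bv)


def expected_flow(params, x, y):
    """Evaluate the fitted affine model at feature position (x, y)."""
    pu, pv = params
    return (pu[0] + pu[1] * x + pu[2] * y, pv[0] + pv[1] * x + pv[2] * y)


def joint_step(Ix, Iy, It, u_hat, v_hat, lam=1.0):
    """Minimize sum (Ix*u + Iy*v + It)^2 + lam*((u-u_hat)^2 + (v-v_hat)^2).

    The penalty term (an assumption of this sketch) adds lam to the
    diagonal, so the system is solvable for any lam > 0.
    """
    gxx = sum(ix * ix for ix in Ix) + lam
    gxy = sum(ix * iy for ix, iy in zip(Ix, Iy))
    gyy = sum(iy * iy for iy in Iy) + lam
    ex = -sum(ix * it for ix, it in zip(Ix, It)) + lam * u_hat
    ey = -sum(iy * it for iy, it in zip(Iy, It)) + lam * v_hat
    det = gxx * gyy - gxy * gxy
    return (gyy * ex - gxy * ey) / det, (gxx * ey - gxy * ex) / det


# Four neighbors whose displacements follow an affine field.
neighbors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
flows = [(1.0 + 0.1 * x, -0.5 + 0.05 * y) for x, y in neighbors]
params = fit_affine_flow(neighbors, flows)
u_hat, v_hat = expected_flow(params, 5.0, 5.0)

# Edge feature: gradients only along x, so v comes from the neighbors.
u, v = joint_step([1.0, 2.0, 3.0], [0.0, 0.0, 0.0], [-2.0, -4.0, -6.0],
                  u_hat, v_hat)
```

In this sketch the edge feature, which an independent solve cannot track, receives its tangential component from the neighbors' affine prediction while its perpendicular component is still driven mainly by the image data.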

Results

The following table compares our algorithm against the state-of-the-art implementation of pyramidal Lucas-Kanade in the OpenCV library. Both algorithms were run with identical parameters on four image sequences from http://vision.middlebury.edu/flow for which ground truth is available. In all cases, the joint tracking algorithm considerably outperforms the traditional approach, reducing the average angular error by roughly 45% on three of the four sequences. Note also that the errors of the joint tracking algorithm are significantly lower than those of the leading dense optical flow algorithms, which achieve 9.26 (AE) and 0.35 (EP) on Dimetrodon and 7.64 (AE) and 0.51 (EP) on Venus (see the Middlebury website).

| Algorithm | Rubber Whale AE | Rubber Whale EP | Hydrangea AE | Hydrangea EP | Venus AE | Venus EP | Dimetrodon AE | Dimetrodon EP |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Standard LK (OpenCV) | 8.09 | 0.44 | 7.56 | 0.57 | 8.56 | 0.63 | 2.40 | 0.13 |
| Joint LK (our algorithm) | 4.32 | 0.13 | 6.13 | 0.45 | 4.66 | 0.25 | 1.34 | 0.08 |

The average angular error (AE) in degrees and the average endpoint error (EP) in pixels of the two algorithms.

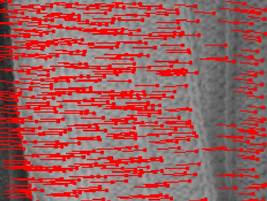

The figure below displays the results of the two algorithms on these image pairs. In general, the joint tracking algorithm exhibits smoother flows and is thus better equipped to handle features without sufficient local information. In particular, repetitive textures, which cause individual features tracked by the traditional algorithm to be distracted by similar nearby patterns, do not pose a problem for our algorithm.

[Figure: for each of the Rubber Whale, Hydrangea, Venus, and Dimetrodon image pairs, the image alongside the results of Standard LK (OpenCV) and Joint LK (our algorithm).]

Our algorithm exhibits fewer erroneous displacements in regions of repetitive texture.

A close-up of the repetitive texture from the top-right corner of the Rubber Whale image shows the improvement:

[Figure: close-up panels showing the image, the Standard LK (OpenCV) result, and the Joint LK (our algorithm) result.]

The difference between the two algorithms is even more pronounced when the scene does not contain much texture, as is often the case in indoor man-made environments. Click on the images in the bottom row for a video of the results.

[Figure: the top row shows the image and its gradient magnitude; the bottom row shows the Standard LK (OpenCV) and Joint LK (our algorithm) results.]
