This problem is similar to that of detecting depth discontinuities from a stereo pair of images, but it is harder for several reasons. First, the motion field is vector-valued, whereas the disparity map is scalar-valued. Also, the epipolar constraint no longer holds, so the search is two-dimensional rather than one-dimensional. Even more problematic is the possibility of nonrigid motion. Finally, we are now dealing with multiple images, where each new image not only provides redundant information about the current scene but also introduces new variables into the equation.

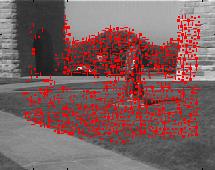

Our approach has been to track a large but sparse set of feature points (using the Kanade-Lucas-Tomasi feature tracker) throughout the image. In this way we avoid wasting time computing motion in areas where the answer is fairly obvious, such as between two features, or in areas where there is insufficient information, such as the untextured sky below. Untextured regions are not as easily handled in motion sequences as they are in stereo images.

As you can see from this video (MPEG, 8.4 MB), these features alone contain enough information to determine the structure of the scene: to segment the objects from each other, and then to detect the discontinuities. Regions move about on the image plane in much the same way that surfaces on the earth move about, to use Prof. Tomasi's analogy with plate tectonics theory. They slide past one another along a motion discontinuity, and where they collide, one surface disappears behind the other, causing occlusion. Where they separate, new parts appear from behind the other surface, causing disocclusion.

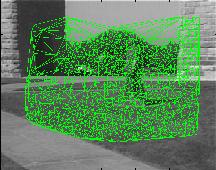

One way to examine these phenomena is to triangulate the feature points with a Delaunay triangulation, and then to view the deformation of these triangles or edges over time. This leads to the idea of image strain, which, to borrow the definition from mechanics, is a measure of the fractional deformation. Where the strain is high, the triangles or edges are changing shape, and where it is low, they remain relatively constant.
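
As a rough illustration of the strain idea, the sketch below computes the fractional change in length of each edge between two frames. It assumes the triangulation edges are already available as index pairs; the function names, the dictionary layout, and the choice of edge length as the deformation measure are assumptions made for this example, not the formulation used in this work.

```python
import math


def edge_length(a, b):
    """Euclidean distance between two 2D points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def edge_strains(edges, before, after):
    """Fractional change in length of each edge between two frames.

    edges  : iterable of (i, j) feature-index pairs (e.g. triangulation edges)
    before : dict mapping feature index -> (x, y) in the earlier frame
    after  : dict mapping feature index -> (x, y) in the later frame
    """
    strains = {}
    for i, j in edges:
        rest = edge_length(before[i], before[j])
        now = edge_length(after[i], after[j])
        # Positive values mean the edge stretched, negative that it shrank.
        strains[(i, j)] = (now - rest) / rest
    return strains
```

An edge whose endpoints move together has a strain near zero, while an edge that spans two independently moving surfaces stretches or shrinks over time.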

In theory, high strain indicates the presence of a discontinuity. To reduce sensitivity to noise, instead of measuring the strain directly, we first group the features using a fast algorithm that we have developed. A feature is selected at random, and the features around it that have similar motions are accumulated, forming a region consisting of features with similar motion. After this, another feature is selected and the process is repeated. With little computation, this algorithm is able to produce fairly good results, as shown here. The statue is detected, and the ground plane is separated from the background. Here, even the clump of trees evident in the video is correctly segmented from the rest of the background. Similarly, the basketball player is separated from the crowd.
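
The grouping step described above can be sketched as a simple region-growing loop. This is a minimal illustration under stated assumptions, not the algorithm as implemented: the neighbor graph (for example, triangulation edges), the tolerance `tol`, and the decision to compare each candidate against the seed's motion rather than against its immediate neighbor are all hypothetical choices.

```python
import math
import random
from collections import deque


def similar(m1, m2, tol):
    """True if two 2D motion vectors differ by at most tol."""
    return math.hypot(m1[0] - m2[0], m1[1] - m2[1]) <= tol


def group_features(motions, neighbors, tol, rng=None):
    """Group features by growing regions of similar motion from random seeds.

    motions   : dict mapping feature index -> (dx, dy)
    neighbors : dict mapping feature index -> iterable of adjacent indices
    tol       : maximum motion difference from the seed (an assumed criterion)
    """
    rng = rng or random.Random(0)
    labels = {}
    unassigned = set(motions)
    label = 0
    while unassigned:
        # Pick a random seed among the features not yet grouped.
        seed = rng.choice(sorted(unassigned))
        unassigned.discard(seed)
        labels[seed] = label
        queue = deque([seed])
        # Accumulate connected features whose motion resembles the seed's.
        while queue:
            f = queue.popleft()
            for g in neighbors.get(f, ()):
                if g in unassigned and similar(motions[seed], motions[g], tol):
                    unassigned.discard(g)
                    labels[g] = label
                    queue.append(g)
        label += 1
    return labels
```

Each feature is visited a bounded number of times, which is consistent with the small amount of computation the method requires.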

Once we have these groups, it is fairly straightforward to trace the high-strain edges between the groups to get the discontinuities shown here. The discontinuities around the statue are correctly detected, as well as the boundary along the wall, and between the two clumps of trees. The mistake of joining the two vertical discontinuities is due to the lack of texture in the clear California sky. Similarly, the discontinuities around the basketball player are found. Although the elbows are missing because the feature tracker does not do well in this area, the basic outline of the player is found.
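
One simple way to pick out candidate discontinuity edges, given group labels and per-edge strain values, is to keep the edges that join two different groups and whose strain magnitude exceeds a threshold. The sketch below only selects such edges; it does not link them into continuous curves, and the function name, data layout, and `min_strain` threshold are assumptions for illustration.

```python
def discontinuity_edges(edges, labels, strains, min_strain):
    """Select edges that cross a group boundary and show high strain.

    edges      : iterable of (i, j) feature-index pairs
    labels     : dict mapping feature index -> group label
    strains    : dict mapping (i, j) -> fractional deformation of that edge
    min_strain : assumed threshold on the strain magnitude
    """
    selected = []
    for i, j in edges:
        crosses_groups = labels[i] != labels[j]
        high_strain = abs(strains[(i, j)]) >= min_strain
        if crosses_groups and high_strain:
            selected.append((i, j))
    return selected
```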

By fitting the discontinuities to the intensity edges or using other criteria, these boundaries could be further refined.