The ability to automatically follow a person is a key enabling technology for mobile robots to interact effectively with the surrounding world. We present the Binocular Sparse Feature Segmentation (BSFS) algorithm for vision-based person following with a mobile robot. BSFS uses Lucas-Kanade feature detection and matching to determine the location of the person in the image and thereby control the robot. Matching is performed between the two images of a stereo pair, as well as between successive video frames. We use RANSAC to segment the sparse disparity map and to estimate the motion models of the person and the background. By fusing motion and stereo information, BSFS handles difficult situations such as dynamic backgrounds, out-of-plane rotation, and similar disparity and/or motion between the person and background. Unlike color-based approaches, BSFS does not require the person to wear clothing whose color differs from the environment. Our system reliably follows a person in complex, dynamic, cluttered environments in real time.
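To make the RANSAC segmentation step concrete, the following is a minimal sketch, not the BSFS implementation. It assumes a deliberately simple model in which all features in one layer share a single disparity value within a tolerance. The function name `ransac_disparity_segment` and the parameters `tol`, `iters`, and `seed` are hypothetical, and the sketch does not decide which layer is the person.

```python
import random


def ransac_disparity_segment(disparities, tol=1.0, iters=100, seed=0):
    """Find the largest group of features that agree on one disparity.

    Assumed model (for illustration only): a layer is described by a
    single disparity value, and a feature is an inlier if its disparity
    lies within +/- tol pixels of that value.
    Returns (model_disparity, inlier_indices).
    """
    if not disparities:
        return None, []
    rng = random.Random(seed)
    best_inliers = []
    for _ in range(iters):
        # Minimal sample for a constant model: one feature's disparity.
        candidate = rng.choice(disparities)
        inliers = [i for i, d in enumerate(disparities)
                   if abs(d - candidate) <= tol]
        if len(inliers) > len(best_inliers):
            best_inliers = inliers
    # Refit the model as the mean over the consensus set.
    model = sum(disparities[i] for i in best_inliers) / len(best_inliers)
    return model, best_inliers


# Toy sparse disparity map: five features near 20 px, three elsewhere.
disparities = [20.1, 19.8, 20.3, 20.0, 5.2, 4.9, 12.0, 20.2]
model, inliers = ransac_disparity_segment(disparities)
outliers = [i for i in range(len(disparities)) if i not in inliers]
```

Features outside the consensus set form the remaining layer or layers. A richer model, such as one that also fits image motion, would replace the one-value hypothesis with a larger minimal sample.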

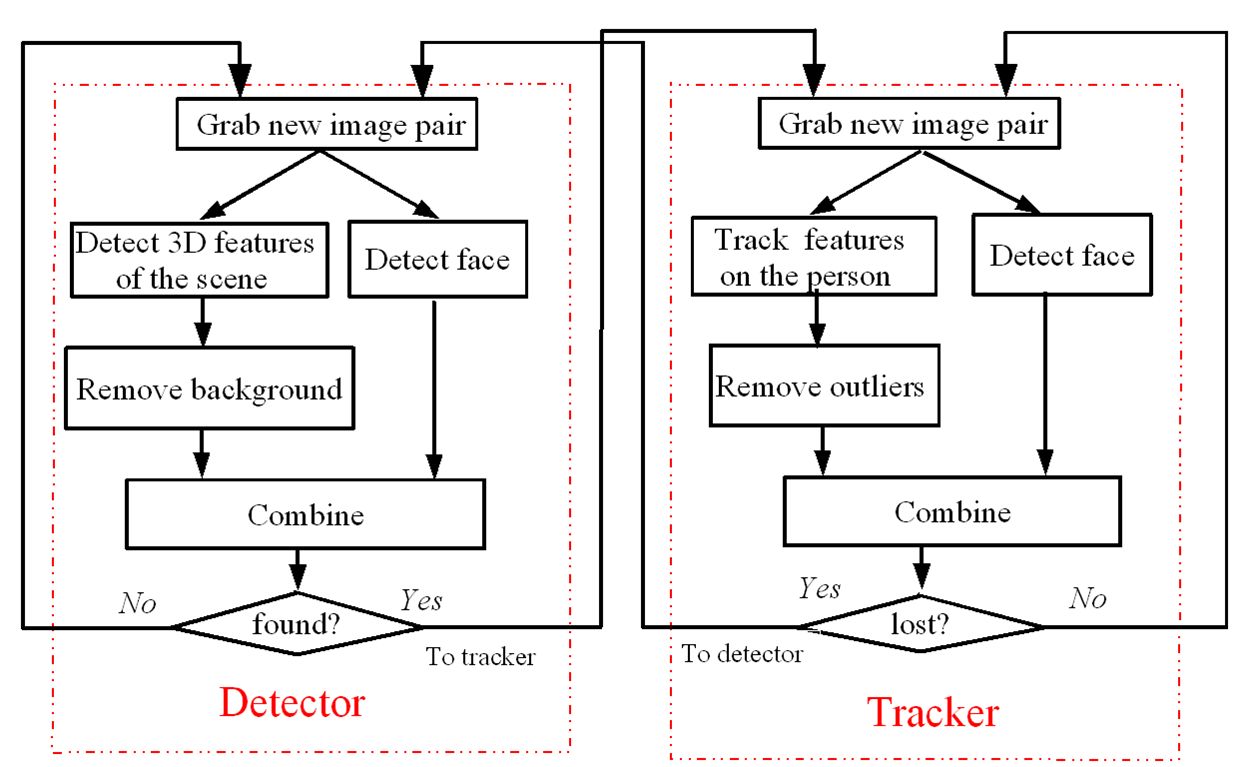

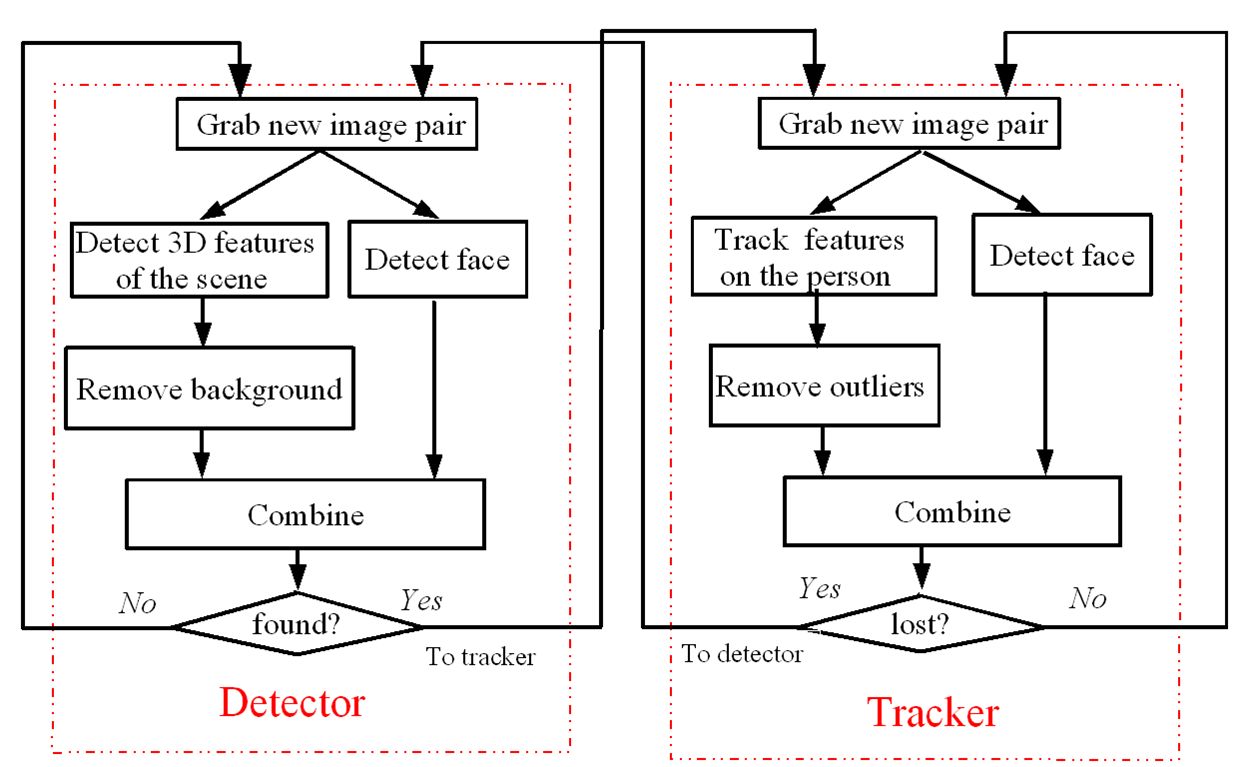

The system consists of a pair of forward-facing stereo cameras on a mobile robot. The algorithm for processing the binocular video, shown in the figure below, consists of two modes. In detection mode, sparse features are matched between the two images to yield disparities, from which the foreground and background are segmented. Once the person is detected, the system enters tracking mode, in which the existing features on the person are tracked from frame to frame. Features not deemed to belong to the person are discarded, and once the person has been lost, the system returns to detection mode. In both modes, the results of the feature algorithm are combined with the output of a face detector, which provides additional robustness when the person is facing the camera.
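The switching between the two modes can be sketched as a small state machine. This is an illustrative sketch under stated assumptions, not the authors' code: the `Follower` class, the `detect` and `track` callables, and the `min_features` threshold for declaring the person found or lost are all hypothetical, and the face detector fusion is omitted.

```python
from enum import Enum


class Mode(Enum):
    DETECTION = 1
    TRACKING = 2


class Follower:
    """Two-mode loop: detect the person, then track until lost."""

    def __init__(self, detect, track, min_features=3):
        # detect(frame) -> features attributed to the person (hypothetical)
        # track(features, frame) -> features still on the person (hypothetical)
        self.detect = detect
        self.track = track
        self.min_features = min_features  # assumed "person present" threshold
        self.mode = Mode.DETECTION
        self.features = []

    def step(self, frame):
        """Process one frame and return the mode for the next frame."""
        if self.mode is Mode.DETECTION:
            found = self.detect(frame)
        else:
            found = self.track(self.features, frame)
        if len(found) >= self.min_features:
            self.mode, self.features = Mode.TRACKING, found
        else:
            # Too few person features: drop them and detect again.
            self.mode, self.features = Mode.DETECTION, []
        return self.mode


# Toy stand-ins: each "frame" is just the number of visible person features.
follower = Follower(detect=lambda frame: list(range(frame)),
                    track=lambda feats, frame: feats[:frame])
modes = [follower.step(frame) for frame in [0, 5, 4, 1, 6]]
```

In this toy run the follower stays in detection mode on the first frame, tracks for two frames, loses the person when only one feature survives, and then detects the person again.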

Experimental Results:

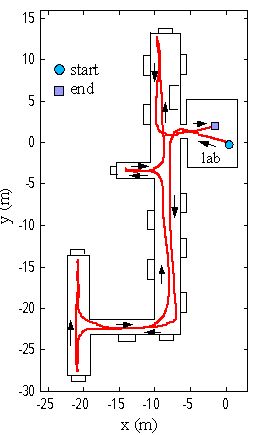

The algorithm has been tested extensively in indoor environments and moderately in outdoor environments. The figure below shows a typical run of the system, with the robot traveling over 100 meters. To initialize, the person faced the robot, after which the person walked freely at approximately 0.8 m/s, sometimes facing the robot and other times turning away.

Publication:

Z. Chen and S. T. Birchfield, "Person Following with a Mobile Robot Using Binocular Feature-Based Tracking," Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), San Diego, California, pages 815-820, October 2007.